Not all sales leads are equal; some are better than others. Lead scoring helps separate the leads that are likely to be better than the rest. Still, high scoring leads may not convert to closed won opportunities. So, just how do you close the gap? Couple lead scoring with opportunity scoring to understand which leads are likely to progress to closed won.

Lead scoring models are frameworks and formulas for evaluating the quality of leads and prioritizing them based on the model output. Models are often point-based, with more points awarded the more closely a lead aligns to the company’s ideal customer profile (ICP). Scoring is often done during the marketing stage, well before prospects enter down-funnel sales pipelines. Models often use first-party and third-party data to compute scores based on the prospect’s interactions with the company’s website and assets, along with data about the prospect (such as title and role) and the company (such as industry, size, and geography). The goal for most companies is to prioritize leads by score so that high scoring leads are responded to first and fast. Those high scoring leads are the ones the model deems most likely to buy, based on past outcomes for similarly scored leads.
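A point-based model of this kind can be sketched in a few lines of Python. This is a minimal illustration only: the `Lead` fields, the ICP values, and every point weight below are assumptions made for the example, not values from any particular scoring product.

```python
from dataclasses import dataclass

# Hypothetical ideal customer profile (ICP); every value here is an assumption.
ICP_INDUSTRIES = {"software", "financial services"}
ICP_TITLES = {"vp sales", "cmo", "head of marketing"}
ICP_MIN_EMPLOYEES = 200


@dataclass
class Lead:
    title: str
    industry: str
    employees: int
    page_views: int         # observed website interactions
    downloaded_asset: bool  # e.g. a white paper download


def score_lead(lead: Lead) -> int:
    """Award points for each factor that matches the assumed ICP."""
    points = 0
    if lead.title.lower() in ICP_TITLES:
        points += 30  # prospect fit: decision-maker title
    if lead.industry.lower() in ICP_INDUSTRIES:
        points += 20  # company fit: target industry
    if lead.employees >= ICP_MIN_EMPLOYEES:
        points += 20  # company fit: size
    points += min(lead.page_views, 10) * 2  # behavior, capped at 20 points
    if lead.downloaded_asset:
        points += 10  # behavior: engaged with an asset
    return points


leads = [
    Lead("CMO", "Software", 500, 12, True),
    Lead("Intern", "Retail", 20, 1, False),
]

# Respond to the highest scoring leads first.
for lead in sorted(leads, key=score_lead, reverse=True):
    print(lead.title, score_lead(lead))
```

In practice the weights would be tuned against past outcomes for similarly scored leads rather than chosen by hand.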

Often companies will develop lead scoring models, encode them into their marketing and prospecting database, and rarely change them. In the ideal scenario, demographic and behavioral lead scoring is dynamic: leads are rescored whenever data changes in the database and whenever there are interactions (inbound, outbound, or observed). Non-dynamic scoring models that run only once, when leads are first entered into the database, produce stale and misleading scores.

According to a heavily relied upon MarketingSherpa research report from 2013-2014, B2B data decays at an annualized rate of 22.5%, or 2.1% per month. By that estimate, a company’s customer relationship management (CRM) system is only about 80% accurate at any point in time and carries about 20% bad data (an 80/20 split reminiscent of the Pareto Principle). Although the study is almost ten years old, the decay rate is likely much higher today, given the significant impact of the “Great Resignation” (2021-2022) on the accuracy of company databases.
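The two decay figures are consistent with each other: compounding 2.1% per month over twelve months gives roughly 22.5% per year. The short sketch below shows the arithmetic, assuming a constant monthly rate and a database that receives no maintenance at all (both simplifications made for the example).

```python
MONTHLY_DECAY = 0.021  # monthly decay rate cited above


def share_still_accurate(months: int, monthly_decay: float = MONTHLY_DECAY) -> float:
    """Fraction of records still accurate after `months` with no maintenance."""
    return (1 - monthly_decay) ** months


annual_decay = 1 - share_still_accurate(12)
print(f"Annualized decay: {annual_decay:.1%}")

for months in (6, 12, 24):
    print(f"After {months} months: {share_still_accurate(months):.1%} still accurate")
```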

The 1-10-100 Rule is often used to estimate how the cost of fixing a problem grows the longer action is delayed. The rule refers to “the cost of quality.” When applied to lead acquisition and B2B database maintenance, the rule suggests that it costs one dollar to verify a lead when it is entered into the database, ten dollars to clean it up later, and one hundred dollars if nothing is ever done with it. Thus, the costs associated with prevention, correction, and failure to correct bad data increase the longer leads sit and accumulate in company databases.
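As a rough illustration of the rule, the sketch below prices the same batch of records at each of the three stages. The per-lead dollar amounts come from the rule as described above; the stage labels and the batch size of 200 are assumptions chosen for the example.

```python
# Per-lead cost in dollars at each stage of the 1-10-100 Rule.
COST_PER_LEAD = {"prevention": 1, "correction": 10, "failure": 100}


def quality_cost(lead_count: int, stage: str) -> int:
    """Cost of dealing with `lead_count` records at the given stage."""
    return lead_count * COST_PER_LEAD[stage]


# Hypothetical batch of 200 records.
for stage in COST_PER_LEAD:
    print(f"{stage:<10} ${quality_cost(200, stage):,}")
```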

The age of the lead, along with the time that has passed since it was last modified, should be considered an important factor in any lead scoring model. If the number of days since creation or last modification is deducted from the lead score, older leads effectively self-destruct.

Lead scoring should consider prospect activity that may result in scores increasing, as well as scores decreasing. Engagement with or by leads should be reflected in positive changes to the lead scores. However, if leads opt out, there should be a substantial negative change to their scores. Opting out and hanging up when called are small actions that often go unnoticed but are outsized indicators of a lead’s buying intent.

One approach would be to purge any low scoring leads or leads that obtain negative scores. All fresh leads start with a score of 100; then, for each day that passes without activity or modification, the score is reduced by one until it hits zero. Using a ticking time bomb scoring model, i.e., the older the lead the lower the score, sets the stage for leads to be removed once they hit a score of zero. These leads could be archived rather than deleted altogether. While the cost of lead acquisition is already sunk, the ongoing cost of maintaining the lead (or, per the 1-10-100 Rule, of not maintaining it) grows quickly.
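The ticking time bomb model can be sketched as follows. The starting score of 100 and the one-point daily deduction come from the description above; the function names are hypothetical, and the sketch assumes that any activity or modification resets the clock, which the description leaves open.

```python
from datetime import date

STARTING_SCORE = 100  # every fresh lead starts here


def time_bomb_score(last_activity: date, today: date) -> int:
    """Lose one point per idle day since the last activity, floored at zero."""
    idle_days = max((today - last_activity).days, 0)
    return max(STARTING_SCORE - idle_days, 0)


def should_archive(last_activity: date, today: date) -> bool:
    """A lead whose score has reached zero is a candidate for archiving."""
    return time_bomb_score(last_activity, today) == 0


today = date(2024, 1, 31)
print(time_bomb_score(date(2024, 1, 1), today))  # 30 idle days
print(should_archive(date(2023, 1, 1), today))   # idle for more than 100 days
```

Archiving on zero, rather than deleting, keeps the record available if the lead resurfaces later.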

Such actions would quickly remove bad data from company databases, keeping them fresh and up to date, driven by the activity of the leads themselves and the actions of company LDRs, BDRs, SDRs, and AEs.

It is common to find that companies score their leads once and never rescore them. Furthermore, activity by business development representatives is never taken into account, so lead scores stay frozen. Ideally, leads are rescored dynamically after every change in data, activity by the lead, and activity by the sales team. Lifecycle lead scoring takes all of this into account. If low scoring leads are archived or purged from company CRMs, only fresh, active leads remain.

Once company databases have been cleaned up and purged of low scoring stale leads, the next step is to deploy opportunity scoring. An opportunity score, like a lead score, is a prediction that an opportunity will convert into a closed won deal.

Opportunity scoring models – like lead scoring models – are frameworks and formulas for evaluating the quality of an opportunity and the likelihood that it will result in a closed won deal. Models are often point-based using factors where more points are awarded based on how closely opportunities align to the company’s ideal opportunity profile (IOP). IOPs are counterparts to the ICP. Company IOPs identify the characteristics of closed won deals and can include factors like time to close, products of interest, size of deals, terms and conditions, size of company, decision makers involved and their roles, and much more.

Dynamic opportunity scoring, when used proactively, can alert sales leadership to opportunities that are increasingly at risk. Dynamic scoring rescores opportunities whenever any variable in the scoring algorithm changes. As a result, opportunities may get higher or lower scores depending on what changed. Progression through opportunity stages at pace should increase opportunity scores. When opportunities stall, opportunity aging should have a negative impact on opportunity scores. Depending on the stage (prospecting, discovery/needs analysis, evaluation/proposal, or closing/negotiation) and the duration in that stage, time should have an outsized impact on opportunity scores, with shorter times in stage raising the scores and longer times in stage lowering them. Thus, the longer opportunities sit in stalled stages, the lower their scores, signaling a decreased likelihood that these opportunities will ever close.
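One way to express time in stage as a scoring factor is sketched below. The stage names follow the list above, but the base points, expected durations, and the cap on the time adjustment are all hypothetical; real values would come from a company's own closed won history.

```python
# Hypothetical base points and expected days per stage.
STAGES = {
    "prospecting": {"base": 20, "expected_days": 14},
    "discovery": {"base": 40, "expected_days": 21},
    "proposal": {"base": 60, "expected_days": 30},
    "negotiation": {"base": 80, "expected_days": 14},
}
MAX_TIME_ADJUSTMENT = 20  # assumed cap on the time-in-stage bonus or penalty


def opportunity_score(stage: str, days_in_stage: int) -> int:
    """Base points for the stage, adjusted up or down by pace within it."""
    cfg = STAGES[stage]
    # Positive when ahead of the expected pace, negative when behind it.
    pace = (cfg["expected_days"] - days_in_stage) / cfg["expected_days"]
    adjustment = max(-1.0, min(1.0, pace)) * MAX_TIME_ADJUSTMENT
    return max(0, round(cfg["base"] + adjustment))


print(opportunity_score("proposal", 15))  # moving at pace
print(opportunity_score("proposal", 90))  # stalled
```

Rerunning the function whenever the stage or the day count changes gives the dynamic behavior described above: the same opportunity scores lower the longer it sits in one stage.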

Conversion rates and scores are not enough for companies seeking optimal results. Tracking conversion rates at each stage of the marketing funnel and progression through the sales funnel is simple enough. No matter what percentage of leads convert from AQLs to MQLs, to SQLs, to HQLs, there will be high, medium, and low scoring leads that pass through the funnel and go on to become opportunities, perhaps through wishful thinking by sales development representatives. The same holds true for opportunities that score low or high: some will always progress to closed won.

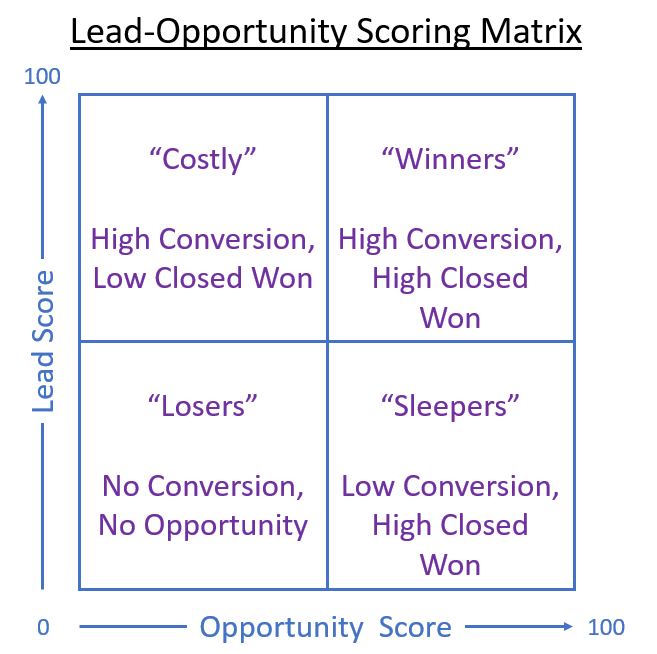

The Lead-Opportunity Scoring Matrix illustrated above helps identify where marketing and sales should focus their efforts to achieve maximum results. Losers are low scoring leads that never progress from AQL to MQL to SQL, so no opportunity is ever created. Costlys are leads that score highly based on ICP fit or high engagement with digital assets and with inside or field sales organizations; opportunities are created and sales teams spend a lot of time working them, but they do not close. Sleepers are leads that do not have high digital or sales team engagement but, when opportunities are created, result in closed won deals. Winners are high scoring leads that result in closed won deals.
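The four quadrants can be expressed as a small classification function. This is a simplified sketch: it reduces each lead to a score and a closed won outcome, the cutoff of 70 between low and high scores is an assumption, and the sample history is made up for illustration.

```python
from collections import Counter

HIGH_SCORE_THRESHOLD = 70  # assumed cutoff between low and high lead scores


def quadrant(lead_score: int, closed_won: bool) -> str:
    """Place a lead in the Lead-Opportunity Scoring Matrix."""
    if lead_score >= HIGH_SCORE_THRESHOLD:
        return "Winner" if closed_won else "Costly"
    return "Sleeper" if closed_won else "Loser"


# Hypothetical (lead_score, closed_won) history.
history = [(90, True), (85, False), (30, True), (20, False), (75, False)]
counts = Counter(quadrant(score, won) for score, won in history)
print(counts)
```

A large share of Costlys or Sleepers in such a tally suggests the lead scoring model is rewarding the wrong factors.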

Machine learning (ML) algorithms can help build robust models based on past performance. These models can quickly and accurately determine which attributes and characteristics are most predictive of closed won deals. But these models are only as good as the data used to train them. A disclaimer similar to the one used in the financial services industry should be stamped on every ML model created: past performance may not predict future results. ML models can be unintentionally biased as a result of the data supplied; stale and bad data may not reflect what actually happens. The human element is also always in play. Accounting for the current roster of inside sales or field sales teams, their tenure with the organization, and the skills they possess could help reduce false positive errors in ML scoring algorithms. Sample size can also have an outsized impact on the model results. A small sample size reduces the power of the ML model while increasing the margin of error, which can render the model utterly useless. The British statistician George Box is often quoted as saying “All models are wrong, but some are useful.” Garbage in, garbage out (GIGO) applies as well: the quality of the output is determined by the quality of the input. So, if companies have rubbish in their CRMs, lead scores and opportunity scores are going to be flawed and nonsensical.

Organizational discipline is required at all levels, from the front-line marketers generating leads, to the business development representatives who handle the data, and beyond to the account executives who manage the sales. Diligent data quality assessments, with accountability for the accuracy of the data, go a long way toward eliminating scoring imperfections and inadequacies.

In Conclusion

There are clear benefits to organizations that couple lead scoring with opportunity scoring, including increased marketing effectiveness, improved marketing and sales alignment, and increased revenue. With higher quality leads and opportunities come increased conversion and end-to-end funnel throughput, which save time and reduce costs while adding layers of accountability for both marketing and sales.

So now you know that not all leads and not all opportunities are equal, and that some are far better than others. If marketing scores leads and sales scores opportunities, the result should be increased revenue and reduced costs.

About the Author

Stephen Howell is a multifaceted expert with a wealth of experience in technology, business management, and development. He is the innovative mind behind the cutting-edge AI powered Kognetiks Chatbot for WordPress plugin. Utilizing the robust capabilities of OpenAI's API, this conversational chatbot can dramatically enhance your website's user engagement. Visit Kognetiks Chatbot for WordPress to explore how to elevate your visitors' experience, and stay connected with his latest advancements and offerings in the WordPress community.